Introduction

In May we (WhatUsersDo http://www.whatusersdo.com/) were invited by NUX (Northern User Experience) to run two workshops, in Leeds and Manchester, exploring how User Experience and Digital Professionals select the right usability testing methodology and tools (given a series of challenging research objectives). (Original post about event) As well as being fun, the workshops proved really insightful – seeing first hand how and why professionals select one method over another led us to conclude:

– very few people have real experience of the methodologies available

– (as a result of the first point) misconceptions abound around the suitability of many approaches

– lab-based testing scored relatively poorly (there’s a league table below) given its maturity.

About NUX

NUX events are run regularly and give UX professionals the opportunity to network and share ideas.

You can find details of NUX here: https://nuxuk.org/

Many thanks to Code ComputerLove (http://www.codecomputerlove.com/) in Manchester and Simple Usability (http://www.simpleusability.com/) in Leeds for hosting the events and for the excellent facilities and refreshments that they provided. We were very grateful to be asked to run the workshops.

How we did it

The events usually have a speaker or two on a relevant topic, and our aim was to deliver interactive sessions which gave people the space to share their experiences of usability testing. First we gave a short presentation on the WhatUsersDo remote video method and other similar methods that are available. This prompted a whole-group discussion on who had used which methods, how often, and what their experiences were.

Having got people thinking, we split the audience into small groups and gave them usability testing scenarios which were challenging both in terms of the product for testing and the user group. Examples included a sexual health website aimed at teenagers, an ecommerce site with users around the world in different language and cultural groups, and a smartphone app to engage NEETs in training and job search.

Fig 1 Testing Methodologies Discussed

Each group was asked to discuss a range of testing methods and then to choose only one and give us some pros and cons for their chosen method. There was much lively debate in the groups and here are the results in table format:

Table 1 Testing Method Discussion Summary

| Method | Pros | Cons | Votes |
| --- | --- | --- | --- |
| Remote with video (e.g. WUD) | | | 4 |
| Remote with analytics (e.g. User zoom) | | | 1 |
| Onsite survey | | | 1 |
| Ethnography | | | 1 |
| Basic Laboratory | | | 1 |

Fig 2 Photo of attendees hard at work on the testing challenges

Market Insight

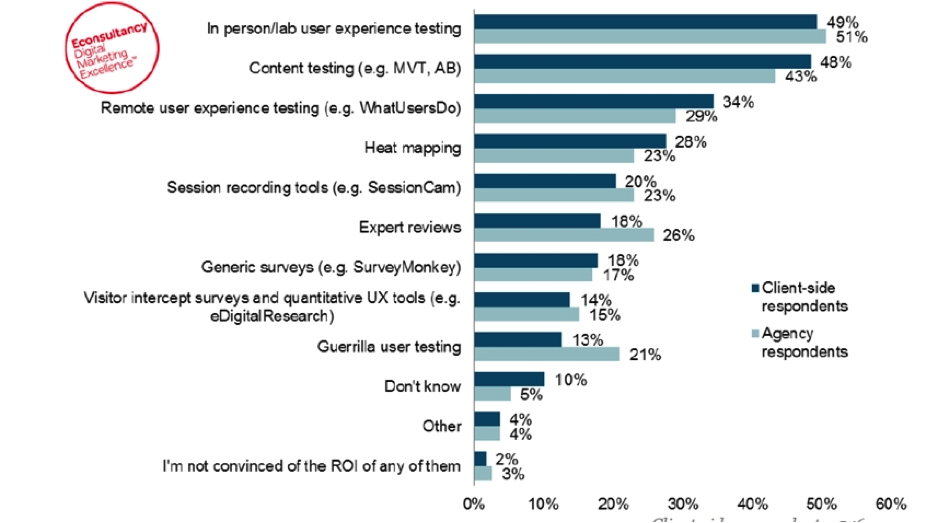

After the feedback session, we presented some results from a recent survey that WhatUsersDo ran with Econsultancy on usability testing in a range of organisations, including web agencies and clients. The big revelation was this paradox:

- 78% committed to delivering the best UX

But

- Less than half do any UX research or testing

- 62% base design decisions on hunches & opinion

How can you deliver the best UX if you don’t do UX research/testing and still mostly base your designs on guesses?

Fig 3 Table of Econsultancy Survey on Usability Testing

Reflections on the events

Only one group went for the traditional usability laboratory, and the basic “lab in a bag” version at that, rather than an advanced laboratory with eye tracking. The general view was that laboratories are expensive, inflexible, intrusive and resource intensive.

The main issues for us were:

- Knowledge of UX testing methods ranged from the very detailed to the patchy. Not one person knew about every method or had used every method. (This was also true of the presenters.)

- A lot of assumptions were made about when and why some methods were suitable or how they could be used. Some of these assumptions were wrong, e.g. that remote testing can only be used at the end of development, when in fact it can be used from the very start with concepts and prototypes.

- Some people saw these methods as being just about testing whereas others recognised that really many of them are about UX research ‘in the round’ and can be used flexibly throughout the user centred design process to get user insight.

- Some groups, when looking at testing methods, thought more about users’ needs and characteristics.

- For the other groups, the logistics and technical aspects of doing the test were the main concern.

- Not many had used guerrilla methods, which is odd given the amount of current literature and UX blog posts about the power of guerrilla testing to give cost effective UX research.

- The usability testing market is still an open market; there are many potential clients who need educating in the value of testing per se and in the various merits and applications of different methods.

- There were many different views on the value of different types of data and the confidence with which design conclusions could be drawn from it. Many were fearful of clients’ reactions to small sample sizes.

- All had difficulty persuading clients to resource testing even once, never mind several times throughout the design and development process. (So clients won’t pay for small user samples to be tested, never mind large ones.)

A question that this last point raises is how many people are really doing user centred design if users are left out of the process once a persona has been generated. If personas come from off-the-shelf marketing models, or are often just the hunches of clients and development teams, then without real users in the process (even just as test guinea pigs), do we really have user centred design in the UX industry at all? Has the term user centred design just become a marketing label?

Given that in the Econsultancy survey 78% said that they took UX seriously but less than 50% said that they did any usability testing, sadly this seems to be the case. But that discussion is for another blog – When is User Centred Design actually User Centred Design?